Microsoft Azure supports two kinds of load balancer configuration. For all internet traffic coming into Azure from outside, you have to use a so-called “internet-facing load balancer”.

All traffic between virtual machines hosted in Azure is handled by an “internal load balancer”. Currently, there are three load-balancing services in Azure:

- Azure Load Balancer

- Application Gateway

- Traffic Manager

Azure Load Balancer

This load balancer can be used as an internet-facing LB or as an internal LB. It is implemented at Layer 4 (the transport layer), so it works with any application protocol carried over TCP or UDP. It is usually used in conjunction with Azure VNets.

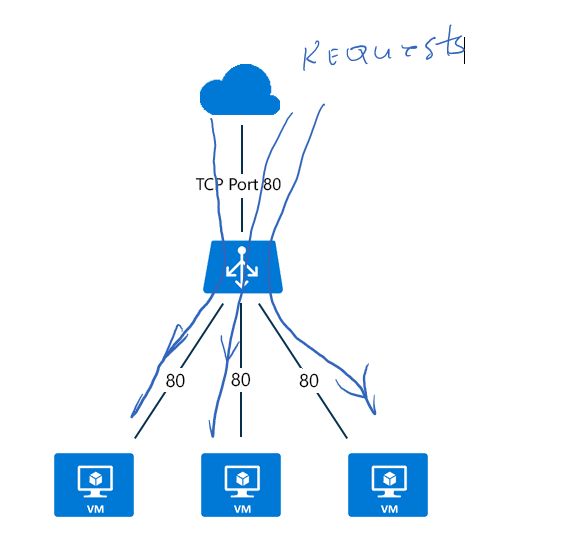

The internet-facing LB (IF-LB) uses a so-called hash-based distribution algorithm to distribute incoming public traffic, as shown in the picture.

The picture shows that traffic is distributed from a public source to an internal (in Azure) destination, but it doesn’t describe how.

This LB currently supports two algorithms for distributing traffic, also called distribution modes:

- Hash Based Distribution Mode

- IP Affinity Distribution Mode

Hash Based Distribution Mode

By default, it uses a hash computed over five values (a 5-tuple):

- Source IP

- Source Port

- Destination IP

- Destination port

- Protocol type
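To make the idea concrete, here is a minimal sketch of hash-based distribution. It is not Azure's actual implementation: the hash function (SHA-256), the modulo step and the backend names are assumptions chosen only to show that the same 5-tuple always lands on the same backend.

```python
import hashlib

def pick_backend(src_ip, src_port, dst_ip, dst_port, protocol, backends):
    """Map a 5-tuple to one backend; identical tuples always map to the same one."""
    key = f"{src_ip}|{src_port}|{dst_ip}|{dst_port}|{protocol}".encode()
    digest = hashlib.sha256(key).digest()
    # Assumption: reduce the hash to an index with a simple modulo.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]

backends = ["vm-0", "vm-1", "vm-2"]  # hypothetical backend pool
first = pick_backend("203.0.113.7", 50123, "198.51.100.10", 443, "tcp", backends)
again = pick_backend("203.0.113.7", 50123, "198.51.100.10", 443, "tcp", backends)
print(first == again)  # True: packets of the same flow reach the same backend
```

Because the source port is part of the hash, a new connection from the same client may land on a different backend.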

IP Affinity Distribution Mode

IP Affinity mode pins a session from a source to a destination. It can be configured to use the source IP and the destination IP to define the sticky session. Once the session is established, the LB makes sure that all traffic from that source is routed to the same destination.

Affinity mode can also be set to use a third parameter, the protocol type, in addition to the two IP addresses.
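The difference to the default mode can be sketched in a few lines. Again, this is only an illustration under assumptions: the hash function, the function name `affinity_backend` and the backend names are made up, and the optional third value is passed through as-is (the example passes the protocol type).

```python
import hashlib

def affinity_backend(src_ip, dst_ip, backends, extra_field=None):
    """Pick a backend from source IP and destination IP, plus an optional third field."""
    fields = [src_ip, dst_ip]
    if extra_field is not None:
        fields.append(str(extra_field))
    digest = hashlib.sha256("|".join(fields).encode()).digest()
    # Assumption: a modulo over the pool size stands in for the real mapping.
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]

backends = ["vm-0", "vm-1", "vm-2"]  # hypothetical backend pool
# The source port is not part of the key, so every connection from this
# client to this destination is routed to the same backend.
two_tuple = affinity_backend("203.0.113.7", "198.51.100.10", backends)
three_tuple = affinity_backend("203.0.113.7", "198.51.100.10", backends, "tcp")
print(two_tuple, three_tuple)
```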

Application Gateway

Application Gateway is an Application Delivery Controller (ADC) offered as a service. It is a gateway with a number of features that sits in front of an application running behind a dedicated IP address. Because it sits in front of the application, it supports various load-balancing features at the application layer (Layer 7). Note that Azure Load Balancer works at the lower Layer 4.

Application Gateway supports round robin distribution, cookie-based session affinity, URL path-based routing, and the ability to host multiple websites behind a single gateway endpoint.
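How round robin and cookie-based affinity work together can be sketched as follows. This is a simplified model, not the gateway's real code: the class name, the cookie name `gateway-affinity` and the backend names are hypothetical.

```python
import itertools

AFFINITY_COOKIE = "gateway-affinity"  # hypothetical cookie name

class RoundRobinGateway:
    def __init__(self, backends):
        self._backends = list(backends)
        self._rotation = itertools.cycle(self._backends)

    def route(self, cookies):
        """Return (backend, cookies to send back to the client)."""
        pinned = cookies.get(AFFINITY_COOKIE)
        if pinned in self._backends:
            # Returning client: honour the affinity cookie.
            return pinned, cookies
        # New client: take the next backend in rotation and pin it.
        backend = next(self._rotation)
        return backend, {**cookies, AFFINITY_COOKIE: backend}

gateway = RoundRobinGateway(["web-0", "web-1"])
first, cookies = gateway.route({})     # new client
second, _ = gateway.route({})          # another new client
returning, _ = gateway.route(cookies)  # first client again
print(first, second, returning)  # web-0 web-1 web-0
```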

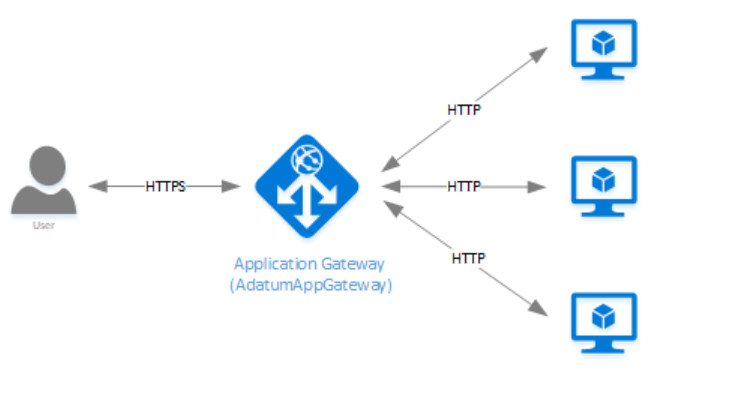

From an architectural point of view, the gateway looks the same as the LB (see the previous picture).

Note, however, that it runs at a higher level. Incoming traffic is now HTTP/S and not an arbitrary TCP- or UDP-based protocol. Application Gateway is used for load balancing of HTTP, HTTP/S and WebSocket traffic. Because the gateway understands these application protocols, it can provide protocol-specific features. For example, this can be SSL offloading (the gateway terminates and decrypts the SSL connection, then routes the request to the application) or URL-based routing.

Such features are not supported by Load Balancer.
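URL-based routing, for instance, needs to look inside the HTTP request, which a Layer 4 balancer never does. A minimal sketch, assuming a longest-prefix match (the matching strategy, the function name and the pool names are assumptions, not the gateway's documented algorithm):

```python
def route_by_path(path, rules, default_pool):
    """Return the pool of the longest matching path prefix, else the default pool."""
    best_prefix = None
    for prefix in rules:
        if path.startswith(prefix):
            if best_prefix is None or len(prefix) > len(best_prefix):
                best_prefix = prefix
    return rules[best_prefix] if best_prefix is not None else default_pool

# Hypothetical rules: path prefix -> backend pool
rules = {"/images/": "image-pool", "/video/": "video-pool"}
print(route_by_path("/images/logo.png", rules, "default-pool"))  # image-pool
print(route_by_path("/index.html", rules, "default-pool"))       # default-pool
```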

It is also worth noting that the gateway can enforce a policy that restricts which cipher suites are used.

Moreover, it provides a Web Application Firewall (WAF) as an integrated component. Such a firewall protects an application against a set of vulnerabilities covered by OWASP rules, such as SQL injection, cross-site scripting, HTTP protocol violations and many others.

For more information about Application Gateway, see the Azure Application Gateway documentation.

Traffic Manager

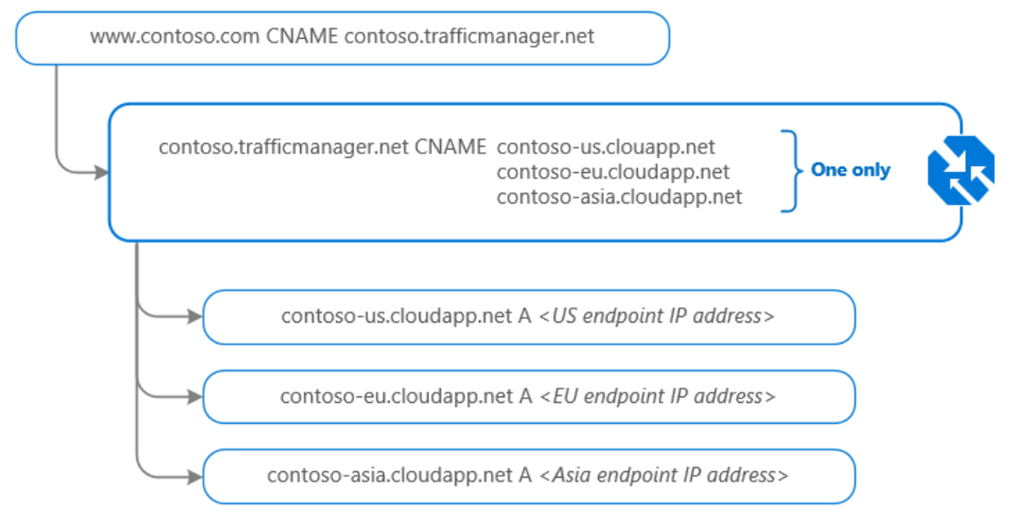

Unlike Load Balancer and Application Gateway, Traffic Manager uses DNS (Domain Name System) to direct requests. Requests can be directed to various endpoints such as VMs, Web Apps, Cloud Services or external services (external from Azure's point of view). The following picture shows how Traffic Manager handles the DNS lookup for a request and chooses one of the destination endpoints based on the rules of the configured routing method.

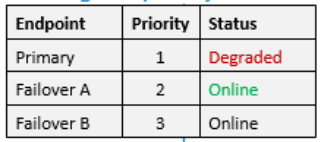

The core of Traffic Manager is the distribution of traffic according to one of several traffic-routing methods. For example, if you want to route all your traffic to a single endpoint but also want to provide a failover endpoint, you use so-called priority routing. If you have three destinations, priority routing chooses the destination with the highest priority as long as it is online. If Primary fails, the next would be Node A, because priority 2 ranks higher than priority 3 (a lower number means a higher priority).

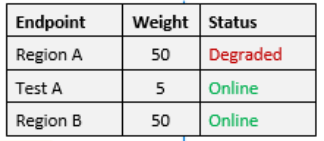
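Priority routing boils down to "take the online endpoint with the lowest priority number". A minimal sketch, where the function name and the third endpoint (Node B with priority 3) are assumptions made for illustration:

```python
def pick_by_priority(endpoints):
    """Return the name of the online endpoint with the lowest priority number."""
    online = [e for e in endpoints if e["online"]]
    if not online:
        return None  # no healthy endpoint left
    return min(online, key=lambda e: e["priority"])["name"]

endpoints = [
    {"name": "Primary", "priority": 1, "online": True},
    {"name": "Node A", "priority": 2, "online": True},
    {"name": "Node B", "priority": 3, "online": True},  # assumed third endpoint
]
print(pick_by_priority(endpoints))  # Primary

endpoints[0]["online"] = False      # Primary fails
print(pick_by_priority(endpoints))  # Node A
```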

If you want to distribute traffic across endpoints, either evenly or according to weights you define, you use weighted routing. In the following example, traffic is evenly distributed between Nodes A and B.

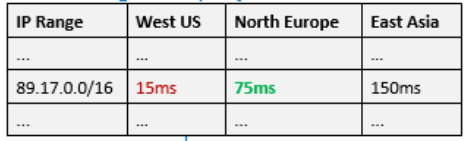
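Weighted routing can be modelled as a weighted random choice per DNS query. The sketch below is an illustration only; the function name and the concrete weight values are assumptions. Equal weights give an even split.

```python
import random

def pick_weighted(weights, rng=random):
    """Pick one endpoint name at random, proportionally to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]

weights = {"Node A": 50, "Node B": 50}  # equal weights: even distribution
rng = random.Random(42)                 # seeded only to make the run repeatable
counts = {name: 0 for name in weights}
for _ in range(10_000):
    counts[pick_weighted(weights, rng)] += 1
print(counts)  # roughly 5000 each
```

Changing the weights to, say, 75 and 25 would shift about three quarters of the queries to Node A.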

If your application is deployed in several locations, performance-based routing, which answers each DNS query with the endpoint that has the lowest latency for the caller, might be useful.

Traffic Manager looks up the source IP address of the incoming DNS request in the Internet Latency Table. Traffic Manager chooses an available endpoint in the Azure datacenter that has the lowest latency for that IP address range, then returns that endpoint in the DNS response.

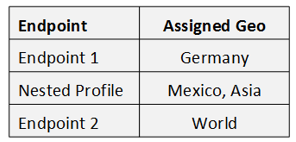
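The lookup described above can be sketched like this. The latency table, its values, the region names and the function name are all invented for the example; the real Internet Latency Table is maintained by Azure and is not exposed in this form.

```python
import ipaddress

# Hypothetical latency table: source network -> {region: latency in ms}
LATENCY_TABLE = {
    ipaddress.ip_network("203.0.113.0/24"): {"westeurope": 20, "eastus": 95},
    ipaddress.ip_network("198.51.100.0/24"): {"westeurope": 110, "eastus": 15},
}

def pick_lowest_latency(source_ip, available_regions):
    """Return the available region with the lowest latency for the source IP."""
    address = ipaddress.ip_address(source_ip)
    for network, latencies in LATENCY_TABLE.items():
        if address in network:
            # Only endpoints that are currently available are candidates.
            candidates = {r: ms for r, ms in latencies.items() if r in available_regions}
            if candidates:
                return min(candidates, key=candidates.get)
    return None  # source range unknown or no endpoint available

print(pick_lowest_latency("203.0.113.5", {"westeurope", "eastus"}))  # westeurope
print(pick_lowest_latency("203.0.113.5", {"eastus"}))                # eastus
```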

Last, but not least, you can use routing based on geographic region. Depending on where a request comes from, you can choose dedicated endpoints.
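In its simplest form, geographic routing is a lookup from the caller's region to an endpoint. The sketch below assumes the region is already known as a country code; the mapping, the endpoint names and the fallback are hypothetical.

```python
# Hypothetical mapping: country code -> endpoint
GEO_MAP = {
    "DE": "endpoint-europe",
    "FR": "endpoint-europe",
    "US": "endpoint-us",
}

def pick_by_region(country_code, geo_map, fallback):
    """Return the endpoint assigned to the caller's country, else the fallback."""
    return geo_map.get(country_code.upper(), fallback)

print(pick_by_region("de", GEO_MAP, "endpoint-world"))  # endpoint-europe
print(pick_by_region("JP", GEO_MAP, "endpoint-world"))  # endpoint-world
```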

For more information about Traffic Manager, see the Azure Traffic Manager documentation.